To create the encodings, we need to choose which BERT model to use. We will use padding and truncation because the training routine expects all tensors within a batch to have the same dimensions.

    from transformers import BertTokenizerFast

    # `tokenizer` is a BertTokenizerFast instance loaded for the chosen model.
    train_encodings = tokenizer(train_queries, train_docs, truncation=True, padding='max_length', max_length=128)
    val_encodings = tokenizer(val_queries, val_docs, truncation=True, padding='max_length', max_length=128)

Create a custom dataset

Now that we have the encodings and the labels, we can create a Dataset object as described in the transformers documentation page about custom datasets.

    train_dataset = Cord19Dataset(train_encodings, train_labels)
    val_dataset = Cord19Dataset(val_encodings, val_labels)

Fine-tune the BERT model

We are going to use BertForSequenceClassification, since we are trying to classify query and document pairs into two distinct classes (non-relevant, relevant).

    from transformers import BertForSequenceClassification
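To see why padding and truncation give every example the same shape, here is a minimal pure-Python sketch. It is a simplification and not the tokenizer's actual implementation: the real tokenizer also handles special tokens and truncates query-document pairs in a pair-aware way. The function name `pad_or_truncate`, the pad id of 0, and the token ids are assumptions made up for illustration.

```python
def pad_or_truncate(token_ids, max_length, pad_id=0):
    """Return (input_ids, attention_mask), both exactly max_length long."""
    kept = token_ids[:max_length]          # truncation: drop anything past max_length
    n_pad = max_length - len(kept)         # number of pad positions (0 if none needed)
    input_ids = kept + [pad_id] * n_pad    # padding: fill the tail with pad_id
    attention_mask = [1] * len(kept) + [0] * n_pad  # 1 = real token, 0 = padding
    return input_ids, attention_mask


# Made-up token ids of different lengths, standing in for tokenized pairs.
batch = [[101, 7, 8, 102], [101, 5, 102, 9, 9, 9, 102]]
encoded = [pad_or_truncate(ids, max_length=6) for ids in batch]

for input_ids, attention_mask in encoded:
    print(input_ids, attention_mask)
```

After this step every row has length 6, so the rows can be stacked into one rectangular tensor per batch. The attention mask records which positions are padding so the model can ignore them.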

For this exercise, we will use the query string to represent the query and the title string to represent the documents. For each query, we have a list of documents, divided between relevant (label=1) and irrelevant (label=0).

    len(training_data[…].unique())  # 50

    training_data[…].groupby("label").count()

Data split

We are going to use a simple data split into train and validation sets for illustration purposes. Even though we have more than 50 thousand data points when considering unique query and document pairs, I believe this specific case would benefit from cross-validation, since only 50 queries have relevance judgments.

    from sklearn.model_selection import train_test_split

    train_queries, val_queries, train_docs, val_docs, train_labels, val_labels = train_test_split(…)

These splits are then used to create the train and validation encodings.
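The cross-validation remark above can be made concrete with a small sketch. This is not what the post does, since the post uses a single train/validation split. It assumes one reasonable reading of the remark: folds are built per query, so that all documents of a query land on the same side of the split. The function name `query_folds` and the toy queries are hypothetical.

```python
from collections import defaultdict


def query_folds(queries, n_folds=5):
    """Build (train_idx, val_idx) pairs where each query belongs to one fold.

    `queries` holds one query string per row (one row per query-document pair).
    """
    rows_by_query = defaultdict(list)
    for row, query in enumerate(queries):
        rows_by_query[query].append(row)

    # Sort the distinct queries so the fold assignment is deterministic,
    # then assign them to folds round-robin.
    distinct = sorted(rows_by_query)
    fold_of = {q: i % n_folds for i, q in enumerate(distinct)}

    folds = []
    for fold in range(n_folds):
        val_idx = [r for q in distinct if fold_of[q] == fold for r in rows_by_query[q]]
        train_idx = [r for q in distinct if fold_of[q] != fold for r in rows_by_query[q]]
        folds.append((train_idx, val_idx))
    return folds


# Toy example: three made-up queries with a few documents each.
queries = ["q1", "q1", "q2", "q2", "q2", "q3"]
folds = query_folds(queries, n_folds=3)

for train_idx, val_idx in folds:
    print(train_idx, val_idx)
```

Grouping by query keeps documents judged for the same query out of both the training and validation sides at once, which matters when there are only 50 judged queries.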

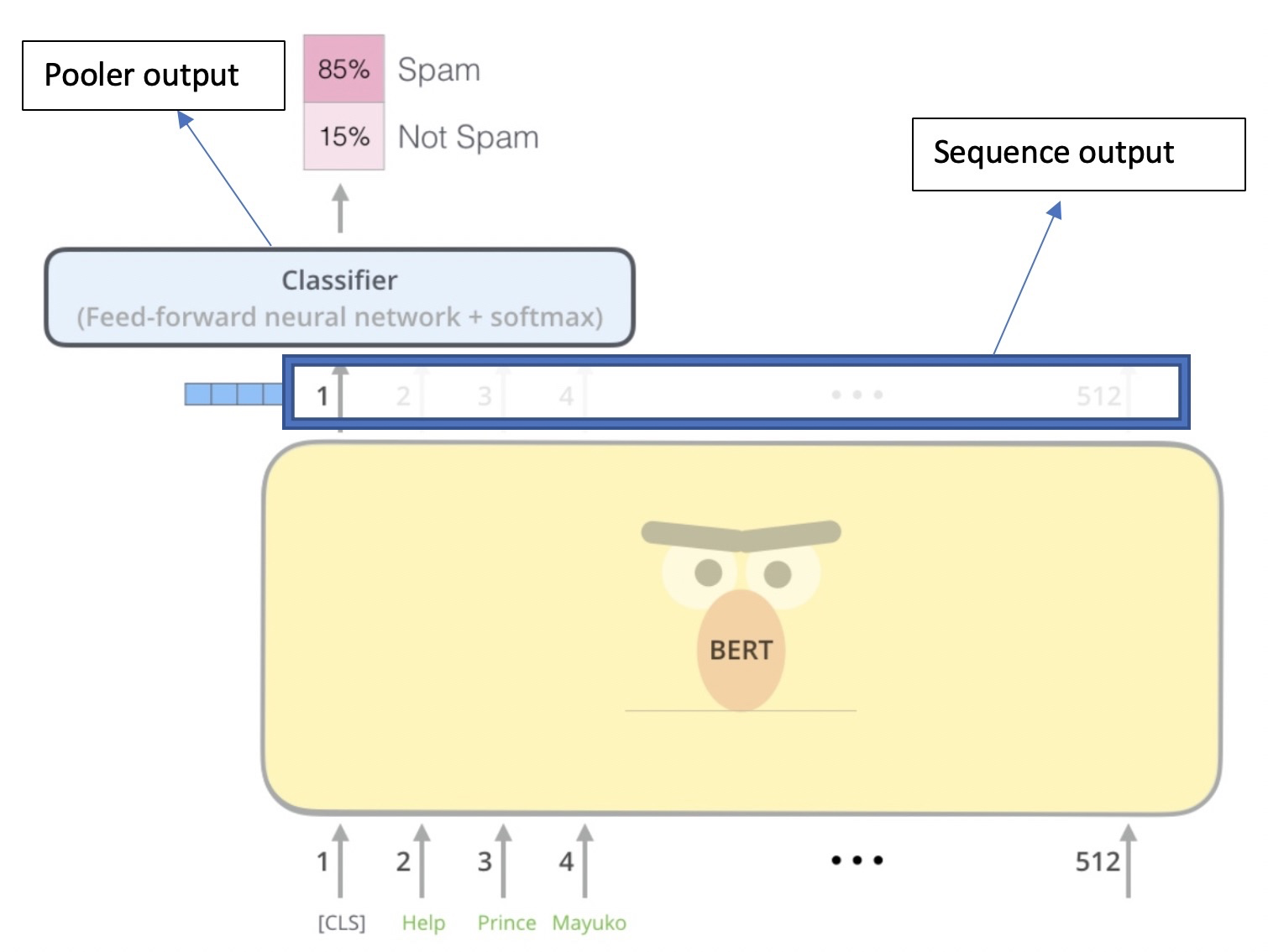

To fine-tune the BERT models for the cord19 application, we need to generate a set of query-document features and labels that indicate which documents are relevant for the specific queries.
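As a rough illustration of what "query-document features and labels" means, the sketch below flattens relevance judgments into one row per query-document pair. The helper name `build_training_rows`, the field names `query` and `title`, and all of the data are made up for illustration and are not taken from the cord19 dataset.

```python
def build_training_rows(judgments, titles):
    """Flatten relevance judgments into one row per query-document pair.

    judgments: {query: {doc_id: label}}, where label is 1 (relevant) or 0.
    titles:    {doc_id: title string}.
    """
    rows = []
    for query, labels_by_doc in judgments.items():
        for doc_id, label in labels_by_doc.items():
            rows.append({"query": query, "title": titles[doc_id], "label": label})
    return rows


# Made-up data for illustration only.
titles = {"d1": "Coronavirus origin study", "d2": "Mask efficacy review"}
judgments = {
    "coronavirus origin": {"d1": 1, "d2": 0},
    "do masks work": {"d2": 1},
}

rows = build_training_rows(judgments, titles)
for row in rows:
    print(row)
```

Each row carries the two text inputs the classifier sees (query and title) together with the binary label it should predict.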


Install required libraries

    !pip install pandas transformers

Load the dataset

We use a dataset built from the COVID-19 Open Research Dataset Challenge. This work is one small piece of a larger project: building the cord19 search app.


This post describes a simple way to get started with fine-tuning transformer models. It will cover the basics and introduce you to the amazing Trainer class from the transformers library. You can run the code from Google Colab, but do not forget to enable GPU support.

Set up a custom Dataset, fine-tune BERT with Transformers Trainer, and export the model via ONNX